Keep Blog

LLM-Wiki

Chatting with Obsidian, Hermes Agent, and Keep

Flows

Code Mode for Agent Memory

Benchmarking Keep with LoCoMo

76.2% on LoCoMo with local embedding/summarization models

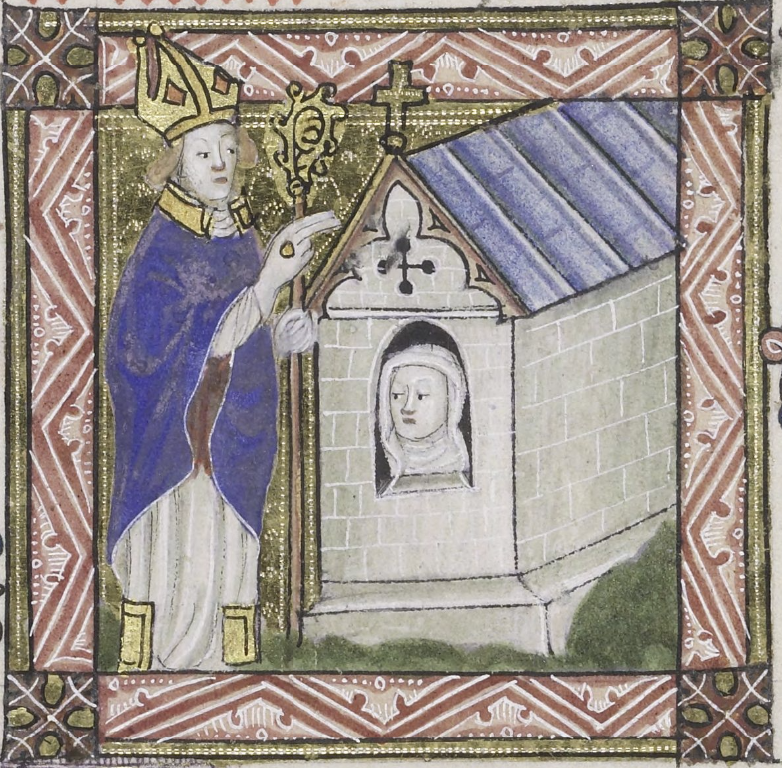

Reflection and Memory

LLM and memory as mirrors

Introducing Keep

Reflective Memory for AI Agents

Wisdom, or Prompt-Engineering?

When the Singularity happened, we were sitting on a park bench in Berkeley.